Evaluating UX Optimization with A/B Testing

Guessing is expensive, especially when it comes to user experience and site monetization. There is ample evidence that even minor changes can noticeably affect user experience and conversion rates. This is why choosing the right ad format, button color, or menu type can result in more pageviews, a higher CTR, and increased sales or conversions. However, the question of how exactly you can make the right choice still stands.

Although there are ways to forecast the audience's reaction to new features and design tweaks, this approach is time-consuming and costly. Such predictions often require elaborate research, yet the result will likely fall well short of 100% or even 80% accuracy. A/B testing is considered more reliable, straightforward, and economically feasible. Instead of making predictions, this method evaluates actual changes in users' behavior.

A/B Testing Essentials

A standard A/B testing experiment compares two versions of a website. In the most basic scenario, the unaltered original version is compared with one that includes the proposed new features. The latter is shown to a part of the target audience, and its performance is measured in real time. If the proposed improvements bring more views, clicks, or conversions, the new features are adopted.
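As an illustration of how a share of the audience might be routed to the new version, here is a minimal Python sketch. It assumes each visitor has a stable identifier (for example, a cookie value). The function name `assign_variant`, the experiment label, and the 50/50 default split are hypothetical choices made for this example, not part of any particular testing tool.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str, treatment_share: float = 0.5) -> str:
    """Deterministically map a visitor to 'control' or 'treatment'.

    Hashing the experiment label together with the visitor ID means the
    same visitor always lands in the same group for a given experiment,
    while different experiments split the audience independently.
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Take the first 32 bits of the hash and scale them to [0, 1).
    bucket = int(digest[:8], 16) / 2**32
    return "treatment" if bucket < treatment_share else "control"


# Hypothetical usage: show the new layout to roughly 20% of visitors.
variant = assign_variant("visitor-12345", "new-menu-layout", treatment_share=0.2)
```

Because the assignment depends only on the identifier and the experiment label, no lookup table is needed to keep a visitor in the same group across sessions, as long as the identifier itself persists.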

Of course, there are more complex split testing models that involve multiple site versions. Well-established Internet companies like Google and Amazon are known to run multiple A/B testing experiments constantly. Still, the key concept of such experiments remains the same: letting the target audience and the data drive the important decisions.

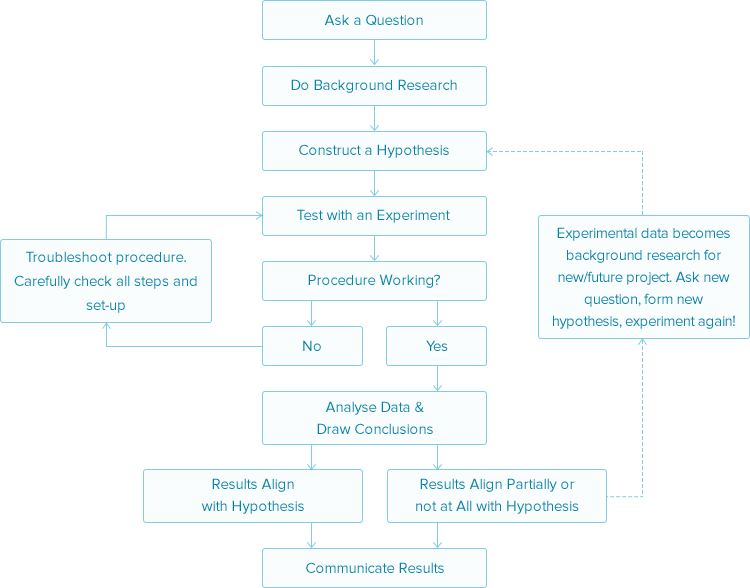

A great number of A/B testing case studies can serve as thought-starters for new experiments. However, keep in mind that copying third-party solutions may produce misleading data. While working on a testing strategy, you will benefit from developing your own A/B testing framework based on the standard scientific method.

A/B Testing Tips

When applied correctly, A/B testing eliminates the guesswork from the UX optimization process. However, you need to reduce the noise in your data if you want your experiment to be effective. The following recommendations will help:

- Be sure to back up your intuition with data when choosing the features to test. Although brainstorming can provide you with some insights, more solid research methods are available. Usability tests help expose interface and design flaws, while on-site surveys help define user intent and objections.

- Test several versions simultaneously by splitting the traffic between them. It is considered good practice to show the altered version to new visitors, so that regular users are not put off by the design changes. Likewise, each returning visitor should see the same version on every visit.

- Ensure that the change is consistent and implemented across the whole website, not just on one page. This is especially relevant for design and interface elements and crucial for pricing tests. Showing the same visitor different variations will invalidate the experiment and drive some visitors away.

- Avoid hasty conclusions. Use statistical confidence and test duration calculators to make sure that you have collected enough data. These tools are often built into A/B testing software. Alternatively, online tools (e.g. the ones from getdatadriven.com or vwo.com) can prove useful.
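To give a sense of what such calculators compute, the sketch below uses only Python's standard library. It shows one common approach, not necessarily the one used by the tools named above: a two-sided two-proportion z-test for comparing conversion rates, and a normal-approximation estimate of the visitors needed per version. The function names, the 5% significance level, and the 80% power default are assumptions made for this example.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for conversion rates of versions A and B."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both versions convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # No variation at all (0% or 100% conversions): nothing to distinguish.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


def sample_size_per_variant(baseline: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed per version to detect a relative lift."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical numbers: 2,000 visitors per version, 200 vs. 260 conversions.
z, p_value = two_proportion_z_test(200, 2000, 260, 2000)
significant = p_value < 0.05

# Visitors per version to detect a 20% relative lift on a 5% baseline rate.
needed = sample_size_per_variant(baseline=0.05, relative_lift=0.2)
```

Dividing the required sample size (times the number of versions) by the daily traffic allocated to the test gives a rough lower bound on the test duration. Stopping earlier, as soon as the p-value happens to dip below the threshold, is exactly the kind of hasty conclusion the tip warns against.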

Summary: Monetize Efficiently with A/B Testing

A/B testing is well suited to publishers who are new to real-time performance testing. Its combination of simplicity and efficiency makes this technique a must-have for your monetization toolkit. When conducting a test, be sure to follow the framework of the standard scientific method and pay attention to the tips outlined above to keep your experiments consistent and accurate.
